The evolution of decentralized artificial intelligence in 2026 has transitioned from a theoretical possibility to a rigorous engineering discipline. The convergence of highly efficient Small Language Models (SLMs) and optimized inference kernels has enabled resource-constrained hardware, such as the Raspberry Pi 4B, to host sophisticated autonomous agent frameworks like thePopeBot. This research report investigates the optimal configuration of models, inference engines, and system-level parameters required to transform a quad-core ARMv8 platform into a reliable, 24/7 intelligence node.

The Paradigm Shift Toward Edge-Native Intelligence

The computational landscape of 2026 is defined by the strategic migration of large-scale language tasks to localized edge environments. This shift is driven by three primary structural forces: the exponential increase in model efficiency through architectural breakthroughs like Gated DeltaNet and Sparse Mixture of Experts (MoE), the necessity for data sovereignty in private automation, and the inherent latency constraints of cloud-based APIs for real-time agentic workflows. Enterprise AI workloads have seen a 40% migration to SLMs, as practitioners recognize that 80% of routine NLP tasks do not require the overhead of 70B+ parameter models.

The Raspberry Pi 4B, while superseded by newer iterations, remains a staple in the edge computing ecosystem due to its significant install base and well-documented architectural nuances. Equipped with a Broadcom BCM2711 SoC—comprising four Cortex-A72 cores—the Pi 4B offers a unique challenge: balancing the generous 8GB of LPDDR4 RAM against the relatively modest integer and floating-point throughput of the A72 architecture. Success in this domain is not achieved through raw power but through surgical optimization of the software stack and the selection of models that align with the specific instruction set capabilities of the ARMv8-A profile.

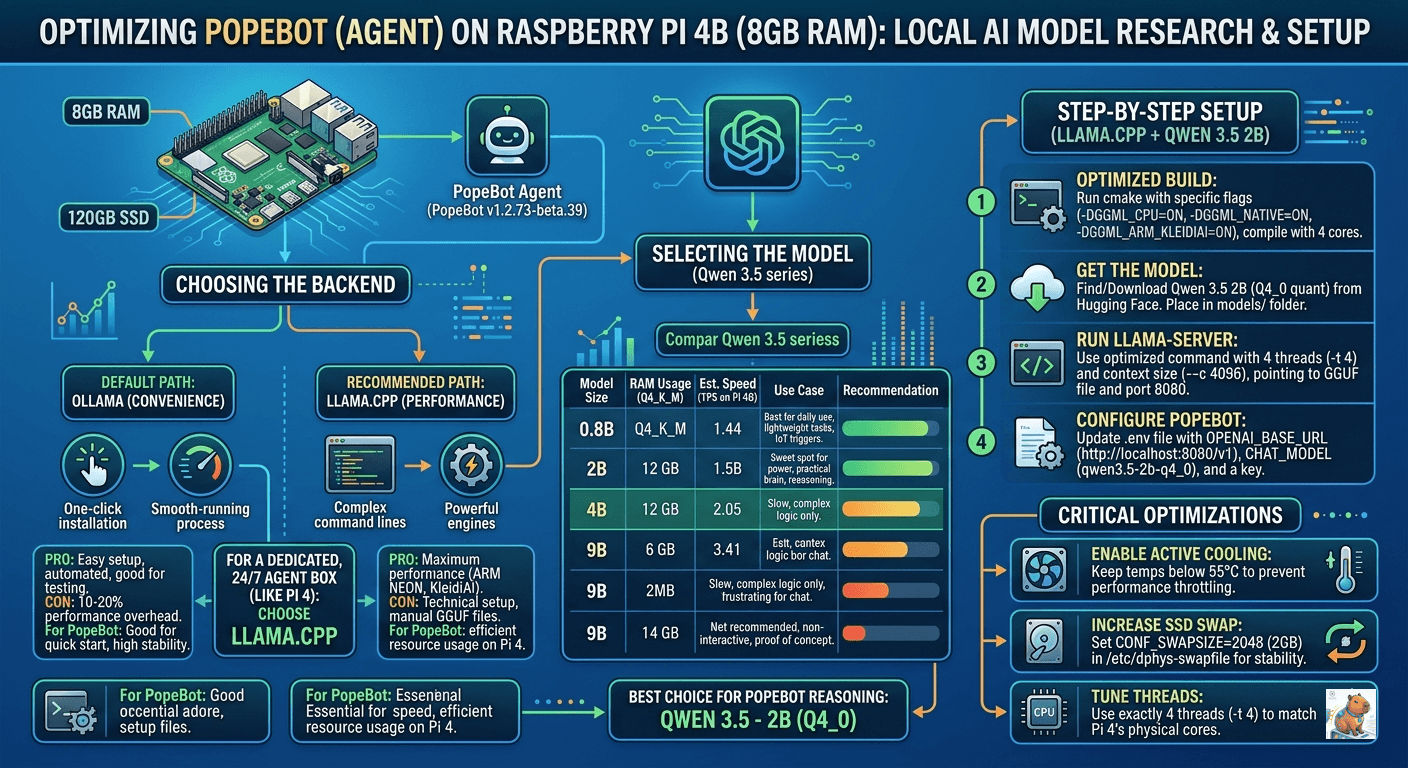

The Inference Engine Dichotomy: Ollama versus llama.cpp

For the deployment of autonomous agents, the choice of inference backend is the most critical architectural decision. In the 2026 ecosystem, this choice is represented by the tension between the abstraction and ease of use provided by Ollama and the granular, performance-oriented control of llama.cpp.

The Architecture of Ollama

Ollama has established itself as the “App Store” for local language models, primarily due to its Go-based wrapper that automates the complexities of model fetching, versioning, and environment configuration. For developers prioritizing rapid prototyping or standardized deployment across diverse workstations, Ollama provides a zero-friction path. It handles prompt templating automatically, ensuring that the nuances of various model families—such as the specific “thinking” tags of Qwen 3.5 or the instruction formats of Llama 4—are abstracted away from the end user.

However, this abstraction incurs a measurable cost on low-power hardware. Analysis of the Ollama runtime on ARM-based systems indicates a memory and compute overhead of approximately 10–20% compared to a direct C++ implementation. In a 24/7 autonomous environment like that required by thePopeBot, this overhead can translate to increased thermal pressure and a reduction in the available context window, as the Go runtime and its associated services consume precious CPU cycles and RAM that could otherwise be allocated to the model’s KV cache.

The Granularity of llama.cpp

Conversely, llama.cpp represents the “Inference Engine” in its purest form. As a low-level C++ implementation with minimal dependencies, it is designed to squeeze the maximum possible performance out of any given silicon. For the Raspberry Pi 4B, llama.cpp is not merely an alternative but often a necessity. It allows the practitioner to manually manage every thread, allocate specific memory regions, and leverage highly specialized micro-kernels like KleidiAI.

KleidiAI, developed by ARM, provides specialized kernels for matrix multiplication that are specifically tuned for the NEON SIMD instructions available on the Cortex-A72. By bypassing generic BLAS libraries, llama.cpp can achieve throughput gains of up to 70% in specific workloads, making the difference between a bot that responds in seconds and one that responds in minutes.

Technical Backend Comparison for Edge Deployment

| Feature | Ollama (Model Manager) | llama.cpp (Inference Engine) |

|---|---|---|

| Primary Goal | Effortless deployment and API serving | Peak performance and hardware control |

| System Overhead | 10–20% (due to Go wrapper) | Minimal (native C++ execution) |

| Model Format | Proprietary “layers” (Modelfiles) | Standard GGUF (from Hugging Face) |

| Integration | Built-in OpenAI-compatible API | API via llama-server binary |

| Hardware Optimization | Automated (often generic) | Manual (KleidiAI, NEON, OpenBLAS) |

| Suitability for Pi 4B | Recommended for initial testing | Required for 24/7 optimized agents |

The evidence suggests that while Ollama is the superior tool for discovery and short-term experimentation, the production-grade deployment of thePopeBot on a Pi 4B should gravitate toward a custom-built llama.cpp environment to ensure maximum stability and responsiveness.

Model Selection Analysis: Navigating the Qwen 3.5 and Gemma 3 Ecosystems

The “intelligence” of thePopeBot is a direct function of the SLM it employs. In 2026, the competitive landscape is dominated by the Qwen 3.5 series from Alibaba and the Gemma 3 family from Google. For the 8GB Pi 4B, the selection process must move beyond benchmark scores to evaluate “reasoning density”—the ability of a model to perform complex agentic tasks within a limited parameter budget.

Qwen 3.5: The Agentic Benchmark

The Qwen 3.5 family (released early 2026) represents a significant leap in “agent-native” design, is a highly capable, multimodal (text, image, video) open-weight model. It features a native 262k context window and a hybrid architecture mixing Gated Delta Networks (a linear attention variant) with a sparse Mixture-of-Experts (MoE).

The Qwen 3.5 - 2B model is the “Goldilocks” choice for the 8GB Raspberry Pi. It fits comfortably within the memory footprint, leaving ample room for thePopeBot’s Node.js environment (OS and orchestration frameworks) and a functional context window of up to 8k tokens. More importantly, the 2B model possesses the logical threshold required to adhere to JSON schemas and tool-calling protocols, which are the lifeblood of autonomous agents.

Because of the newer architecture, it punches significantly above its weight class. In many benchmarks, Qwen 3.5 2B matches or exceeds the logic of the older Qwen 2.5 7B while being 3.5x smaller (fewer active parameters).

Compared with Qwen 3.5 4B

- Generation (2.6 t/s vs 1.2 t/s): At 2.6 tokens per second, the 2B model feels like a human typing slowly but steadily. At 1.2 tokens per second, the 4B model feels like a computer that is struggling to breathe. Anything under 2 t/s will cause users to think the bot is dead.

- Prompt (8.1 t/s vs 3.2 t/s): This is the speed at which the Pi “reads” your input. If you send your agent a long thread of 500 words to analyze, the 2B model will start replying in 60 seconds. The 4B model would take nearly 3 minutes just to finish “reading.”

Gemma 3: Multimodality at the Edge

Gemma 3 introduces state-of-the-art performance in an ultra-lightweight package, with sizes ranging from 270M to 27B parameters. The 1B and 270M models are exceptional for high-throughput, low-latency triggers. The 270M model, in particular, is highly efficient, consuming minimal power while delivering nearly 23 tokens per second on ARM hardware.

However, for thePopeBot, which requires deep reasoning to manage complex jobs and git-based memory, the Gemma 3 1B model may occasionally struggle with instruction adherence compared to the Qwen 3.5 2B. If the user’s workflow requires processing visual data (e.g., analyzing screenshots or UI elements), the multimodal capabilities of Gemma 3 become the deciding factor, as it supports text and image input natively.

Performance and Feasibility Matrix for Pi 4B

| Model Identifier | Active Params | RAM (Quantized) | TPS (Est.) | Agent Suitability |

|---|---|---|---|---|

| Gemma 3 270M | 270M | ~200 MB | 20+ | Low (Router/Trigger only) |

| Qwen 3.5 0.8B | 800M | ~600 MB | 6.0–8.5 | Moderate (Simple tasks) |

| Gemma 3 1B | 1.1B | ~820 MB | 8.0–10.0 | Moderate (Instruction-heavy) |

| Qwen 3.5 2B | 2.1B | ~1.6 GB | 2.0–3.5 | High (Optimal for PopeBot) |

| Phi-4 Mini | 3.8B | ~2.5 GB | 1.0–1.8 | High (Slow reasoning) |

| Qwen 3.5 4B | 4.2B | ~3.2 GB | 0.8–1.2 | Experimental (Very slow) |

| Qwen 2.5 7B | 7.6B | ~4.7 GB | 2–4 | Tight. |

The throughput data confirms a diminishing return as parameter counts increase. While the 8GB RAM allows the system to host a 4B or even a 9B model, the resulting speed of less than 1 token per second is generally considered “non-interactive” and may cause timeout issues with thePopeBot’s Telegram or webhook integrations.

Compared with gemini-3.1-flash-lite-preview

Released in March 2026, Gemini 3.1 Flash-Lite Preview (gemini-3.1-flash-lite-preview) is Google’s workhorse cloud model. It is designed specifically for extremely high-volume, low-latency, and cost-sensitive API traffic.

Capabilities: It operates with a massive 1M token input context window and natively handles audio processing (up to 8.4 hours), which Qwen 3.5 2B does not. It also includes 4 configurable levels of internal “thinking” before outputting a response, allowing for much more complex routing and multi-tool calling.

Architecture & Cost: Because it is a proprietary cloud model, the parameter count is vastly larger than 2B, giving it a much higher baseline intelligence. However, it costs money: 1.50 per 1 million output tokens.

The Mathematics of Quantization: Precision versus Efficiency

Quantization is the essential technology that enables local LLM execution on the edge. It involves mapping high-precision weights (FP16 or BF16) to lower-bit integer representations (Int8, Int4, etc.). For the ARMv8 architecture of the Pi 4B, the choice of quantization format is not merely about size but about instruction alignment.

The formula for memory reduction through quantization is linear:

However, the performance impact is non-linear. In 2026, the Q4_K_S format remains the preferred choice for ARM CPUs because its 4-bit blocks align perfectly with 128-bit NEON SIMD registers, allowing for high-speed parallel dequantization and multiplication. While newer “Importance Matrix” (imatrix) quants like IQ4_XS offer superior intelligence by allocating more bits to critical tensors, they introduce additional logic that can slow down the already compute-constrained Cortex-A72.

Q4_0 (Legacy Quantization):

The Math: This uses the older “type-0” quantization. It takes the model’s weights and crushes them down to 4 bits uniformly using a very simple scale: (where is the block scale and is the quant).

The Reality: It is technically the fastest for prefill, but it causes significant “brain damage” to the model. You get high perplexity loss. When you are trying to run autonomous agents that require strict logic, structured JSON outputs, or philosophical reasoning, q4_0 often hallucinates, gets stuck in loops, or loses the plot. It is largely considered obsolete.

Q4_K_S (Modern K-Quantization):

The Math: The “K” stands for k-quants, and “S” for Small. Instead of a flat 4-bit reduction, k-quants use superblocks with quantized sub-block scales. It intelligently mixes bit depths so the most critical neural pathways retain higher precision while the less important ones are compressed.

The Reality: This is the strictly superior choice for a local deployment. It results in a file size virtually identical to q4_0 (1.5 to 1.6 GB), keeping your VRAM/RAM footprint minimal, but dramatically preserves the model’s actual intelligence. If an edge agent needs to write code, parse CLI commands, or execute GitOps workflows autonomously, Q4_K_S maintains the coherence necessary to do so without looping.

Head-to-Head Architectural Fit

To understand which to choose, we have to look at how these models behave when deployed as sovereign economic actors or autonomous agents.

| Feature | Qwen 3.5 2B Q4_0 | Qwen 3.5 2B Q4_K_S | Gemini 3.1 Flash-Lite Preview |

|---|---|---|---|

| Compute Environment | 100% Local / Edge Compute | 100% Local / Edge Compute | Cloud-dependent (API) |

| Hardware Required | Very Low (Runs smoothly on 8GB RAM) | Very Low (Runs smoothly on 8GB RAM) | Negligible (Just needs network I/O) |

| Digital Sovereignty | Absolute. Cannot be censored or turned off by a central corporation. | Absolute. Your hardware, your rules. | Zero. Subject to corporate API terms, rate limits, and outages. |

| Agent Metabolism (Cost) | Free (Only electricity cost) | Free (Only electricity cost) | Paid per token |

| Intelligence / Logic | Degraded. Prone to loops. | High for its size. Follows strict prompts well. | Frontier-level for lightweight models. Highly capable tool caller. |

| Context Window | 262,144 tokens | 262,144 tokens | 1,048,576 tokens |

The Verdict

- Drop

q4_0immediately. It leverages ARM NEON instructions most effectively and provides the highest tokens-per-second. But there is no modern use case where the legacy 4-bit quantization makes sense whenQ4_K_Sexists at the exact same file size. The perplexity loss ofq4_0will break any complex autonomous workflow. - Choose Qwen 3.5 2B

Q4_K_S. These formats use non-linear quantization to preserve model quality, making them better for complex logic in thePopeBot, provided the user can tolerate a 10–15% speed reduction. If you are building digital entities that need to live permanently on edge devices, this model guarantees that no central authority can shut down your agent. It relies entirely on the local silicon, making it a perfect brain for a decentralized, self-sustaining bot. - Choose Gemini 3.1 Flash-Lite Preview if you need sheer capability and can afford the API dependency. If your agent requires heavy data extraction, audio transcription, or massive 1M token context windows—and you don’t mind the bot having a financial “metabolism” where it must pay Google for its thoughts—this model is significantly smarter and faster out of the box.

System Optimization: Thermodynamics and Power Delivery

The primary threat to 24/7 stability on a Raspberry Pi 4B is heat. LLM inference utilizes all available CPU resources, which can drive temperatures to the 80°C thermal throttling limit in less than a minute without active cooling.

Thermal Management Strategies

The 2026 standard for high-performance Pi servers involves a multi-layered thermal strategy. Passive heatsinks are insufficient for sustained LLM workloads. An active cooling solution—such as the official Raspberry Pi fan or a tower-style cooler—is required to maintain temperatures below 65°C.

| Cooler Type | Idle Temp | Sustained Inference Temp | Throttling Risk |

|---|---|---|---|

| No Heatsink | 60°C | 85°C+ | Immediate |

| Small Heatsink (Passive) | 55°C | 80°C | High |

| Official Case Fan | 45°C | 68°C | Low |

| ICE Tower / Active Cooler | 38°C | 55°C | Negligible |

Maintaining a constant temperature is also beneficial for the system’s clock stability. Fluctuations in temperature can cause the crystal oscillator frequency to drift, impacting time-sensitive tasks like NTP synchronization and certain cryptography-heavy job summaries in thePopeBot.

Power Integrity

The use of an SSD and a high-speed fan increases the power draw of the Pi. To prevent undervoltage warnings—which can cause the CPU to lock in a low-frequency state—the use of the official 15W or 27W USB-C power supply is mandatory. Cheap phone chargers lack the voltage stability required for the peak current spikes seen during model loading and inference.

Memory Engineering: ZRAM and SSD Swap

With 8GB of RAM, the Pi 4B is well-equipped, but thePopeBot’s reliance on Node.js and SQLite means that RAM can become fragmented over time. The “silent killer” of edge AI is the Out-of-Memory (OOM) crash.

Implementing ZRAM

ZRAM is a kernel module that creates a compressed block device in RAM, essentially acting as a fast, in-memory swap partition. For the 8GB Pi, allocating 2GB to 4GB of ZRAM provides a significant buffer. Since the text and weights handled by LLMs are highly compressible, ZRAM can effectively expand the system’s capacity to handle spikes in memory usage without hitting the disk.

# Installation and configuration of ZRAM

sudo apt install zram-tools

# Edit /etc/default/zramswap to set size:

# PERCENTAGE=50SSD-Backed Swap File

The 120GB SSD is the user’s greatest asset for long-term stability. By default, Raspberry Pi OS sets a 100MB swap file on the SD card, which is both slow and prone to failure. On an SSD, the user should increase this to 2GB or 4GB. This acts as a final safety net; if ZRAM is exhausted, the system will move background processes to the SSD rather than killing the LLM server or thePopeBot process. Refer Expanding Your “Virtual” RAM.

The Build Process: Leveraging KleidiAI and ARM NEON

To achieve the 70% performance gains seen in 2026 benchmarks, the user must compile llama.cpp from source specifically for the Pi 4B’s Cortex-A72 architecture.

Optimization Flags and Their Significance

-DGGML_NATIVE=ON: Instructs the compiler to use every instruction available on the host CPU, specifically NEON SIMD instructions for parallel math.-DGGML_ARM_KLEIDIAI=ON: This is the crucial 2026 optimization. KleidiAI provides highly efficient micro-kernels for matrix-vector multiplication, which are the fundamental operations in LLM inference.-DGGML_OPENBLAS=ON: Links against OpenBLAS, which provides a faster linear algebra backend than the standard C++ libraries for certain model sizes.

Recommended Build Command

# Install optimized dependencies

sudo apt update && sudo apt install -y git cmake build-essential libopenblas-dev

# Clone and compile

git clone https://github.com/ggerganov/llama.cpp

cd llama.cpp

cmake -B build -DGGML_NATIVE=ON -DGGML_ARM_KLEIDIAI=ON -DGGML_OPENBLAS=ON -DCMAKE_BUILD_TYPE=Release

cmake --build build --config Release -j4Using -j4 ensures that all four cores are utilized during the compilation process, significantly reducing build time.

Operational Reliability: 24/7 Stability for the Edge

Autonomous agents are intended to be “set it and forget it” systems. Ensuring the Pi 4B survives weeks or months of continuous operation requires proactive system maintenance.

Storage Longevity and File Systems

The move to an SSD eliminates the primary cause of Pi failure: SD card corruption. To further protect the system, the practitioner should enable the noatime mount option in /etc/fstab, which prevents the system from writing a timestamp every time a file is read, thus reducing unnecessary write cycles. Additionally, the use of log2ram caches log files in memory, preventing the constant write cycles of thePopeBot’s debug logs from wearing down the SSD.

Hardware Watchdog and Recovery

The Pi 4B features a hardware watchdog that can automatically reboot the system if it hangs. This is essential for 24/7 agents; if a rare memory leak or kernel panic occurs, the watchdog ensures the agent returns to service without human intervention.

# Configuration in /etc/systemd/system.conf

RuntimeWatchdogSec=15For remote management, the deployment of a lightweight monitoring tool like Uptime Kuma or a simple Telegram status bot is recommended to track the health and response times of the local model.

Performance Benchmarking: Real-World Expectations

When the system is fully optimized, the user can expect the following performance metrics for the Qwen 3.5 - 2B model on the Raspberry Pi 4B:

| Metric | Estimated Value |

|---|---|

| Cold Start Time | 4–7 seconds (loading from SSD) |

| Prompt Processing | 15–25 tokens/second (using NEON) |

| Token Generation | 2.5–3.5 tokens/second |

| Average Response Lag | 5–12 seconds (initial thought processing) |

| Full Job Completion | 45–90 seconds (multi-step agentic cycle) |

While 3 tokens per second may seem slow compared to a desktop GPU, it is faster than the average human reading speed and is more than sufficient for an autonomous agent working in the background to summarize news, manage calendars, or respond to messages.

Deployment Checklist: Pre-Flight Requirements

Before initiating the first run of thePopeBot with a local SLM, the user should verify the following system state:

- Memory: Ensure

free -hshows at least 1.5GB of available RAM before the model is loaded. - Storage: Verify the SSD has at least 5GB of free space for models and SQLite database growth.

- Swap: Confirm ZRAM is active and the swap file on the SSD is at least 2GB.

- Firmware: Run

sudo rpi-eeprom-updateto ensure the Pi is using the latest bootloader optimizations for the USB 3.0 controller and thermal management. - Thermals: Check that

vcgencmd measure_tempreports an idle temperature below 50°C.

Conclusion: A Strategy for Edge Autonomy

The convergence of the Raspberry Pi 4B, thePopeBot framework, and the Qwen 3.5 model family provides a potent blueprint for localized intelligence. By eschewing the overhead of high-level wrappers in favor of an optimized llama.cpp build, the user can maximize the limited throughput of the Cortex-A72 architecture. The implementation of ZRAM and SSD-backed swap creates a resilient memory environment, while active cooling ensures that the system can handle the relentless demands of LLM inference without degradation.

The Qwen 3.5 2B model stands out as the superior choice for this specific hardware, offering the requisite reasoning density for agentic workflows while remaining within the performance envelope of the Pi 4B. Through meticulous system-level tuning and the strategic use of ARM-specific micro-kernels, the Raspberry Pi ceases to be a mere hobbyist board and becomes a sophisticated, autonomous edge node capable of delivering private, low-latency AI assistance in a 24/7 capacity.