It is 2026. If you walk into a room of engineers today and announce you’re starting a new web app, they immediately assume you’re orchestrating an AI backend in Python, writing high-concurrency microservices in Rust, or wrestling with React Server Components in Next.js.

When I tell them my core stack runs on Meteor + Blaze, the usual reaction is a polite, confused nod. Meteor is a classic. It’s a “legacy-modern” framework that cleanly migrated to an async architecture in the 3.x era, but it is rarely the darling of the modern component economy.

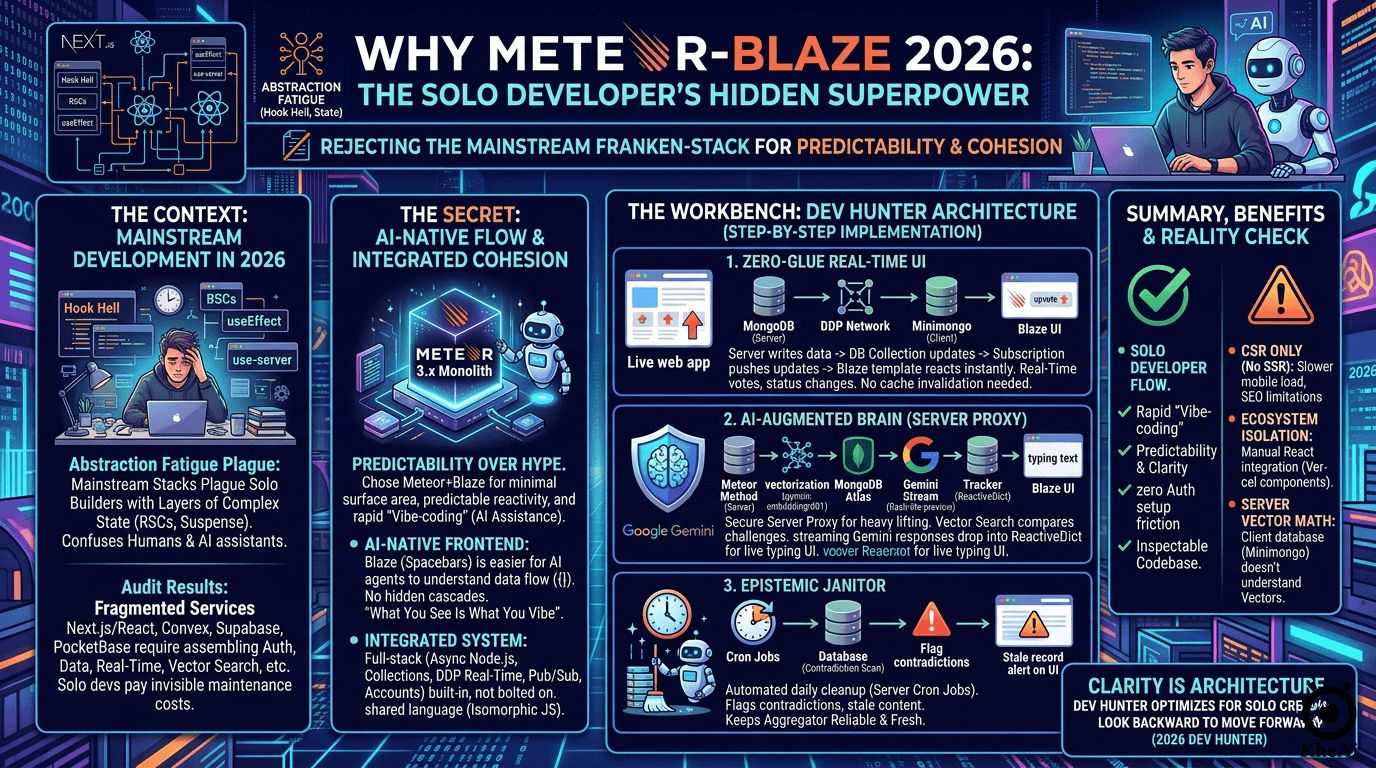

I am building Dev Hunter—a Reddit-style aggregator designed to collect, track, and analyze online-only, solo developer challenges with cash prizes under $2k. Building a full-stack, AI-integrated monolith from scratch is a significant undertaking. When I audited the modern ecosystem, I realized mainstream development is currently plagued by abstraction fatigue—layers of complex state management that confuse both human developers and AI coding assistants.

So, I rejected the modern “Franken-stack.”

After heavily auditing the trade-offs, I found that pairing a 2012-era UI paradigm with a 2026 AI brain is actually a solo developer’s secret superpower. Dev Hunter requires rapid “vibe-coding” (using AI to generate logic), real-time state synchronization, seamless deployment, and—most importantly—an architecture that is easy for me to independently verify and maintain.

Here is my deep-dive architectural audit of why I chose predictability over hype, and exactly how you can replicate this setup.

Part 1: Trading Hype for Predictability

Dev Hunter is a real-time aggregator: it needs fast updates, low operational friction, AI-assisted analysis, and enough structure to stay sane as the product grows. The core question was not, “What is the most fashionable stack?” It was, “What stack gives me the fewest moving parts while still supporting real-time state, clean server logic, and AI workflows?”

That is why the evaluation should be framed around a few concrete requirements:

- Real-time updates for upvotes, countdowns, and live challenge status.

- A database model that fits short-form challenge data and later analysis.

- A path for AI-generated summaries, embeddings, and strategy matching.

- Minimal glue code between the frontend and the backend.

- A deployment story that does not turn the project into a platform-integration exercise.

Most modern app stacks solve power by adding layers. You get one service for auth, another for data, another for real-time updates, another for vector search, another for deployment, and another for frontend state. That can be a valid trade-off for a massive enterprise team. For a solo builder, it is the primary source of friction. You spend more time configuring CORS and aligning TypeScript schemas than building your product.

Meteor + Blaze

Meteor is the closest thing in this group to an integrated full-stack operating model. Its docs emphasize collections, methods, publications/subscriptions, DDP, and built-in accounts, which means the real-time pipeline is part of the framework rather than something bolted on later. Blaze is Meteor’s built-in reactive rendering layer, with Spacebars templates and Tracker-driven updates. That gives you a compact mental model: server writes data, publications push it, templates update.

The main advantage is cohesion. The main limitation is ecosystem breadth. You are choosing a smaller, more opinionated universe in exchange for fewer seams.

Next.js + React

Next.js (with React) is the undisputed king of modern web development, and for good reason. Its ecosystem gravity is unmatched, and the framework has evolved far beyond a simple request/response wrapper. With the App Router, it offers a sophisticated model of Server Components, Server Actions, and advanced caching semantics. It is a powerful system built for scale and high-performance streaming.

However, for a solo developer, this power introduces significant architectural fragmentation. React is no longer just a UI library; it is now a complex orchestration layer of use-client vs. use-server directives and Suspense boundaries. When I mapped my requirements to this stack, the friction was immediate. Because Next.js remains fundamentally request/response-based, it falls short on native, out-of-the-box WebSockets. To get the live upvotes Dev Hunter requires, I would have to duct-tape third-party services together, adding the exact “abstraction fatigue” I was trying to avoid.

Convex

Convex is the strongest “modern Meteor-like” alternative in the list. Its platform centers on reactive queries, server functions, and a database that updates the UI automatically when underlying data changes. Convex also publishes an open-source, self-hostable model, which reduces some lock-in concerns compared with a purely hosted platform.

Its appeal is simple: less glue, less manual synchronization, fewer cache invalidation problems. Its tradeoff is that you are adopting Convex’s own runtime and data model instead of the broader conventions of the general web ecosystem.

Supabase

Supabase is best understood as a Postgres platform with several integrated services: Auth, Realtime, Storage, and vector tooling. Its Realtime system supports database-related subscriptions and presence-style capabilities, while its auth stack covers standard sign-in flows. For AI-heavy applications, the ecosystem around Postgres and pgvector is a serious advantage.

The tradeoff is not capability; it is fit. Supabase is excellent when a Postgres-first design is acceptable. It is less natural if your architecture is already centered on MongoDB habits and you want to preserve that data model.

FeathersJS

FeathersJS is a lightweight real-time API framework, not an all-in-one app environment. It is designed around services, works with multiple transports, and supports MongoDB through official database adapters. That makes it one of the best choices when the goal is portability and realtime behavior without committing to a proprietary backend platform.

The advantage is flexibility. The cost is that you still have to assemble more of the system yourself than you would with Meteor, Convex, or Supabase.

PocketBase

PocketBase is the simplest “one binary, one service” option in the set. It is open source, uses embedded SQLite, and includes auth, file storage, and realtime subscriptions. For a small product or an MVP, its deployment model is extremely attractive.

Its weakness is scale shape, not usability. It is intentionally compact. That is a strength for a quick launch, but it is not the same kind of long-term platform story as a fuller backend framework.

The Clean Decision Matrix

Before writing a single line of code, I evaluated the contenders based on my strict requirements: real-time updates, document DB fit, AI pipeline capabilities, minimal glue code, and deployment hygiene.

| Stack | The Architecture | Real-Time Model | Data & AI Fit | Setup Friction | The Verdict |

|---|---|---|---|---|---|

| Meteor + Blaze | Integrated full-stack framework + reactive UI | Built-in DDP, pub/sub, Tracker | Excellent for Mongo workflows; AI assembled manually | Low | Winner. The most cohesive “one system” experience. |

| Next.js + React | UI framework + rendering model | Needs external real-time layer | High ecosystem support, but fractured data layers | High | Great for ecosystem scale, terrible for solo dev glue-code. |

| Convex | Reactive backend/database platform | Native reactive queries | Strong for live updates; AI is platform-specific | Low | Great Meteor-like modern alternative, but locks you into their runtime. |

| Supabase | Postgres backend platform | WebSockets via subscriptions | Excellent pgvector AI support | Medium | Powerful, but forces a shift from NoSQL (MongoDB) to SQL. |

| FeathersJS | Real-time API framework | Service events + WebSockets | Strong for MongoDB | Medium | Highly portable, but requires manual assembly. |

| PocketBase | Lightweight backend in one binary | Built-in subscriptions | Basic AI integration; limited scale | Very Low | Brilliant for fast MVPs, lacks heavy backend scaling. |

If the goal is to minimize architectural surface area, Meteor + Blaze is still the most coherent option. It gives you real-time behavior, a direct server-to-UI feedback loop, and minimal conceptual overhead.

Part 2: Why Meteor-Blaze is AI-Native

We have reached a complexity ceiling in 2026. Mainstream frameworks have become so abstracted that maintaining them—even with AI coding assistants—is a chore. When an AI agent tries to debug a modern React app, it frequently hallucinates trying to navigate “Hook Hell” or complex state hydration because the context window required to understand the architecture is massive.

I took a second look at Meteor. With the release of the 3.x architecture, it quietly dropped its legacy technical debt (Fibers), fully embraced standard async/await Node.js, and modernized its build tools.

More importantly, Blaze is the ultimate AI-Native frontend. Blaze uses “Spacebars”—a straightforward, flat, Handlebars-like logic format. When an AI reads a Blaze template ({{#if}}, {{#each}}) alongside a Meteor Method, it instantly understands the data flow. There are no hidden useEffect cascades. It is “What You See Is What You Vibe.” Because Meteor is isomorphic, the frontend and backend share the exact same JavaScript language, making it incredibly easy to maintain and verify manually or via AI chats.

Part 3: The Dev Hunter Workbench

To build this “Frankenstein Masterpiece,” you must strip away unnecessary moving parts and rely on high-performance cloud state. Here is my exact workbench:

- The Framework: Node.js (LTS) and Meteor + Blaze 3.0 (for zero-glue real-time reactivity).

- The Styling: Tailwind CSS. Rapid, utilitarian styling without leaving the HTML file.

- The Cloud State: MongoDB Atlas. A standard local MongoDB is insufficient. You need Atlas for Vector Search, which is mathematically required to compare user pitches against historical hackathon data.

- The Brain: Google AI Studio. I use Gemini 3.1 Flash Lite Preview and the

gemini-embedding-001model (sincetext-embedding-004is retired). - The Infrastructure: A GitHub Repo attached to Google Cloud Run via CI/CD.

- The Economy: Stripe.

With the physical workbench set, the architecture itself had to fulfill a specific product philosophy.

Dev Hunter needed five things more than anything else: real-time updates for votes, a document model for challenge entries, a secure way to call AI services, a “freshness” layer so challenges don’t rot, and a deployment path that doesn’t turn into a platform-integration exercise.

Meteor handled the first two with the least friction. Its publication/subscription model isn’t an afterthought—it’s the transport layer of the application. By bridging the server-side MongoDB collection with the client-side Minimongo cache, I get a direct feedback loop: write data on the server, and the UI reacts instantly.

For the AI side, I wanted the client to stay thin. The browser should not hold API keys, manage privileged calls, or do heavyweight processing. Meteor Methods are the right place for that work because they run server-side and integrate naturally with the rest of the application flow. When I need to call an embedding model or a summarization model, I want that to happen on the server where the logic is controlled and auditable.

For embeddings and semantic search, I chose a setup built around Google’s Gemini embedding model and MongoDB Vector Search. Google’s current docs describe the Gemini Embedding model as suited to semantic search, document retrieval, and recommendation systems, and MongoDB’s Atlas docs describe Vector Search as the mechanism for indexing embeddings and running semantic or hybrid search over them. That combination fits Dev Hunter extremely well because the product is not just storing challenge posts; it is comparing, clustering, and analyzing them.

Part 4: Step-by-Step Architecture Implementation

I think about the system in one clean chain. First, a challenge entry lands in the database. Second, a server Method validates the write and triggers any downstream processing. Third, the app creates or updates embeddings for the challenge text. Fourth, the published data updates the UI immediately for every connected user. Fifth, any AI-generated analysis is stored in a way that can be re-used, inspected, and refreshed later.

Step 1: The Zero-Glue Real-Time UI

Building a “Live Dashboard” in Next.js requires exhausting duct tape: managing WebSockets, handling state synchronization, and invalidating caches. Meteor’s native DDP (Distributed Data Protocol) handles WebSocket synchronization automatically.

- I define a MongoDB Collection on the server.

- I publish it.

- The Blaze frontend subscribes to it.

When a user clicks “Upvote” on a dev challenge, the database updates, and every connected user sees the new score instantly. Zero lines of cache-invalidation code written.

That flow is the reason I like Meteor for this project. The publication/subscription model is not an afterthought. It is the transport layer of the application. Meteor’s docs explicitly describe subscriptions as a bridge between the server-side MongoDB collection and the client-side Minimongo cache, with incremental updates arriving as the published data changes. That is the exact behavior I want for live votes, live counts, and challenge state.

Step 2: The AI-Augmented Brain (Server-Side Proxy)

For the AI side, I am not trying to make the browser clever. I am trying to make the server responsible. Google’s Gemini API supports streaming and real-time interfaces, which makes it practical to build responsive AI interactions without pushing privileged logic into the client. The point is not that the UI should do the intelligence work. The point is that the UI should receive the result cleanly and react to it.

Meteor executes heavy lifting inside secure Node.js “Meteor Methods” on the server.

- Vectorizing: When a new challenge is added, a Meteor Method calls the

gemini-embedding-001API to turn the description into a vector array, storing it directly alongside the document in Mongo Atlas. - Streaming Analysis via Tracker: Because of Blaze’s global Tracker, I can fetch external Gemini API data and drop it into a

ReactiveDict. The HTML template automatically updates the millisecond the data arrives. When I ask Gemini to analyze a winning strategy, the user’s screen types out the text in real-time without manual WebSocket management. - Security & Auth: Executing this via Server-Side Proxy eliminates Browser CORS issues and prevents leaking API keys. Furthermore, Meteor’s

accounts-baseremains the undisputed champion for attaching OAuth tokens to users, turning identity management into a one-line command.

Step 3: The Epistemic Janitor (Self-Cleaning Data)

For freshness, I treat the application like a knowledge system instead of a static database. Challenge deadlines expire. Prize amounts change. Statuses become stale. That means the app needs a scheduled review process, not just a passive content store. I want stale records flagged, not forgotten. That is a small detail, but it is the kind of detail that turns an aggregator into a reliable tool.

I implemented an automated “Epistemic Janitor.” Using Meteor’s server-side cron jobs, the system wakes up every 24 hours, scans the database for contradictions against current dates, and flags the epistemic_status of old challenges to “stale,” sending me a UI notification to verify.

Part 5: The Reality Check (What Blaze Can’t Do)

I am not always right, and neither is this stack. To be intellectually honest, we must acknowledge the invisible walls of this architecture:

- The Server-Side Rendering (SSR) Gap: Blaze is fundamentally a Client-Side Rendering (CSR) framework. Unlike React Server Components, Blaze cannot stream HTML skeletons while an API is fetching. You must download the JS bundle first. For SEO-critical, sub-second mobile loading, Blaze loses.

- Ecosystem Isolation: If Vercel drops a brilliant new AI UI component, it is built for React. In Blaze, you are writing the integration glue manually.

- Vector Isolation: Meteor’s client-side database (

minimongo) does not understand Vector Search. All vector math must be executed on the server and manually published to the client. - Hiring Difficulty: In 2026, finding a “Blaze Expert” is difficult. Most talent has moved to component-based frameworks.

The Solution: Offload heavy compute. Do not run heavy LLM text processing in the main Meteor event loop. Push the heavy AI compilation to a separate Node.js worker via GitHub Actions, and let the Meteor server act purely as a lightweight API traffic cop.

Conclusion

The lesson I learned while building Dev Hunter is not that modern tools are bad. They are not. The lesson is that over-assembled stacks create invisible maintenance costs, and those costs compound fastest when you are building alone.

For a solo developer, the best architecture is often the one you can explain in one breath: a realtime database, a server-side business logic layer, a simple reactive UI, and a clean path for AI calls that never exposes secrets to the browser. That is what Meteor + Blaze gives me. It lets me move quickly without turning the app into a pile of disconnected abstractions. It keeps the product inspectable. It keeps the codebase honest. It keeps the system close to the problem.

Dev Hunter needs to stay alive, current, and easy to reason about. That is why I chose a stack that optimizes for clarity over fashion. In 2026, that still feels like a competitive advantage.