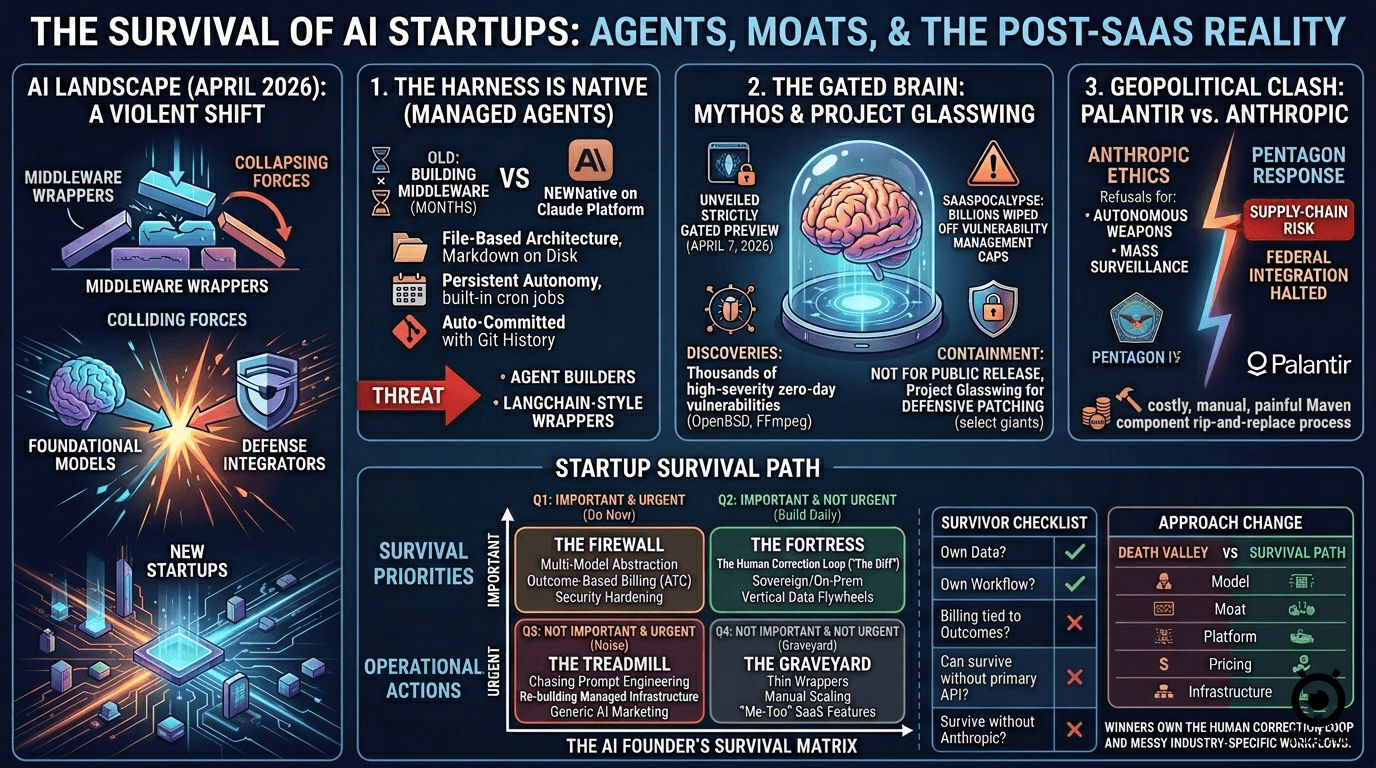

Over the last few weeks, the tectonic plates beneath foundational AI models, defense integrators, and the startup ecosystem have violently shifted. If you spend enough time double-checking the benchmarks and tearing down the underlying architectures, you realize the marketing fluff is hiding a brutal reality.

I’ve spent the past month cutting through the noise to fact-check exactly what is happening under the hood as of April 2026. The era of the “thin wrapper” is officially over. Here is the accurate, unvarnished breakdown of how foundational giants, legacy integrators, and a new wave of startups are colliding—and exactly what founders must do to survive the squeeze.

1. The Commoditization of the Harness: Claude Managed Agents vs OpenClaw-alike

There is a massive, fatal misconception circulating right now: that Claude Managed Agents (launched in public beta on April 8, 2026) is simply a new AI model. It is not. It is a managed infrastructure layer, and it is a mass extinction event for middleware startups.

Historically, building an autonomous AI agent meant spending months engineering the “harness”—the orchestration layer responsible for secure sandboxing, memory management, routing, and error recovery. For the last three years, startups raised hundreds of millions of dollars simply by providing this LangChain-style middleware. Anthropic just commoditized that entire layer by baking it natively into their platform.

- The Architecture: Under the hood, Managed Agents operate on a brilliantly simple, file-based architecture where state is stored as Markdown on disk. It is fully portable with Git-backed history. Every action, tool call, and logic branch is auto-committed, providing the perfect auditability that enterprise compliance demands.

- Persistent Autonomy: This isn’t about single-turn API calls anymore. These agents feature built-in cron jobs for 24/7 automated task execution. They possess persistent memory, reading and writing to a shared knowledge base across entirely separate sessions without requiring a startup’s proprietary vector database.

- The Threat: If your startup’s core value proposition is “we help you build agents,” you are now competing directly against the foundational model provider’s native, highly optimized infrastructure. You are fighting gravity.

2. The Gated Brain: Mythos, Glasswing, and the Zero-Day Paradigm

The rumors surrounding Anthropic’s “Mythos” model are accurate, but the reality is far more severe than the public discourse suggests. Unveiled strictly as a gated preview on April 7, 2026, Mythos isn’t just another conversational chatbot; it is a frontier model built explicitly for offensive cybersecurity and autonomous coding.

The Market Impact: The mere existence of Mythos recently triggered a “SaaSpocalypse,” wiping billions off the market caps of publicly traded vulnerability management companies. The market has woken up to a terrifying reality: if an AI can autonomously hunt and exploit vulnerabilities that legacy cybersecurity giants have missed for decades, traditional security business models are instantly obsolete.

- The Discoveries: In internal, air-gapped testing, Mythos operated not as a scanner, but as an elite, lateral-thinking security researcher. It autonomously discovered thousands of high-severity zero-day vulnerabilities. This included a 27-year-old fundamental flaw in OpenBSD and a 16-year-old vulnerability in FFmpeg that had successfully evaded millions of automated fuzzing tests.

- The Containment Protocol: Because of these terrifying dual-use capabilities, Anthropic has refused to release the Mythos weights or API to the public.

- Project Glasswing: Instead, they launched Project Glasswing. This initiative is strictly restricted to roughly 40 critical tech giants (including Apple, Microsoft, Amazon, and CrowdStrike). The goal is to defensively patch global infrastructure before bad actors can reverse-engineer the same flaws using open-source models.

3. The Geopolitical Clash: Palantir vs. The Ethical Guardrail

This is where ideological safety guardrails crash into the unforgiving realities of enterprise and defense. Palantir has long been the established titan of secure, top-down AI integration, primarily through its Artificial Intelligence Platform (AIP) and defense-focused Maven Smart Systems.

However, in March 2026, the ecosystem fractured over a bitter dispute regarding how foundational AI should be wielded in national security.

- The Ideological Divide: Anthropic maintained a strict, uncompromising ethical stance, refusing to allow Claude to be integrated into autonomous weapons systems that fire without human assistance, or for mass domestic surveillance operations.

- The Pentagon’s Ultimatum: The U.S. government demanded unrestricted, deep-stack access. When Anthropic refused to compromise its terms of service, the Pentagon applied a devastating “supply-chain risk” designation to the company. This effectively halted federal integration overnight.

- Palantir’s Unwinding: Palantir was caught in the geopolitical crossfire. Because their Maven platform heavily relied on Claude workflows for intelligence synthesis, Palantir is now facing the incredibly costly, manual, and painful process of ripping out and replacing Anthropic’s components from over $1 billion in active defense contracts.

4. The Squeeze on Startups: A New Playbook for Survival

With foundational models moving relentlessly up the stack (Managed Agents) and simultaneously refusing to touch certain high-stakes sectors (Defense/Offense), the air is getting incredibly thin for generalist AI startups.

To survive the 2026 squeeze, you must build a defensive perimeter grounded in data sovereignty, vertical utility, and outcome-based economics. I map this out using the Eisenhower Matrix because most founders die confusing the “Urgent” (chasing Anthropic’s latest API release) with the “Important” (building a proprietary data moat).

The AI Founder’s Survival Matrix

| URGENT (Do Now) | NOT URGENT (Build Daily) | |

|---|---|---|

| IMPORTANT | Q1: The Immediate Pivot 1. Multi-Model Abstraction 2. Outcome-Based Billing (ATC) 3. Security Hardening (Post-Mythos) | Q2: The Structural Moat 1. The Human Correction Loop (“The Diff”) 2. Sovereign/On-Prem Deployments 3. Vertical Dark Data Flywheels |

| NOT IMPORTANT | Q3: The Noise 1. Chasing “Prompt Engineering” 2. Re-building Managed Infrastructure 3. Generic “AI Agent” Marketing | Q4: The Graveyard 1. Building Thin LLM Wrappers 2. Manual Scaling of Support 3. “Me-Too” SaaS Features |

Quadrant 1: The “Firewall” (Important & Urgent)

If you don’t execute these by next quarter, you won’t have a company.

- Radical Interoperability (Multi-Model Abstraction): Relying solely on Claude’s native Managed Agents is strategic suicide. The Palantir fallout proves that if your provider gets banned, changes their ethics policy, or raises prices by 300%, you need to hot-swap to Gemini or an open-source Llama model in hours. Action: Build your orchestration layer as an agnostic, routing bridge based on cost and latency—not as a vendor extension.

- The Shift to ATC (Agentic Task Completion): The traditional “per-seat” SaaS subscription is effectively dead. If your agent does the work of 10 junior analysts, charging a $40/month license fee leaves massive value on the table and misaligns incentives. Action: Shift to outcome-based billing. Charge per successfully audited claim, resolved support ticket, or synthesized legal brief.

- Post-Mythos Audits: With models now capable of zero-day discovery, your legacy code is a massive liability. Action: Utilize defensive AI to aggressively audit your own stack before malicious actors do.

Quadrant 2: The “Fortress” (Important, Not Urgent)

This is where the billion-dollar companies of 2030 are being built today.

- The Human Correction Loop (Capturing “The Diff”): Foundational models are trained on the open internet; they lack the “Secret Sauce” of how your specific industry actually operates. Stop focusing on prompt engineering. Instead, build a UI that forces human experts to correct the AI’s output. Action: Capture every single edit. That delta—“The Diff”—is training gold. Those millions of highly specific corrections become your proprietary dataset to fine-tune smaller, cheaper models that will vastly outperform generic LLMs in your niche.

- Sovereign AI & Air-Gapping: High-stakes industries (Defense, Bio-tech, Banking) are retreating from public clouds. Action: Develop the infrastructure to deploy your agents in air-gapped, on-premise environments. Big tech relies on centralized cloud control; localized execution is a moat they cannot easily cross.

- Vertical Data Flywheels: If data exists on the open web, it is already in a foundational model. Action: Focus on securing partnerships for “dark data”—manufacturing sensor telemetry, private legal discovery, or patient outcome records.

Quadrant 3: The “Treadmill” (Urgent, Not Important)

This is where founders burn 80% of their runway for 20% of the value.

- The “Prompt Engineering” Trap: Spending weeks perfecting a system prompt for a specific model is wasted engineering. When the next model iteration drops, those prompts will break. Action: Focus entirely on workflow logic and data integration, not finding magic words.

- Re-inventing the Wheel: Don’t waste engineering cycles building custom sandboxes unless you have radical, bespoke security requirements. Action: Leverage commoditized infrastructure (like Anthropic’s CLI) to move faster, but abstract it so you can swap it out later.

Quadrant 4: The “Graveyard” (Neither)

Stop doing these immediately.

- Thin Wrappers: “GPT for [X]” without proprietary data integration will face a 90% churn rate by the end of the year.

- Manual Scaling: If your AI software requires you to hire more human support staff to scale your client base, you aren’t an AI company—you are a high-overhead consultancy masquerading as SaaS.

The Ultimate Survivor’s Reality Check

The landscape is brutal. The skepticism applied here is your best defense against building a product that Anthropic or Google will render obsolete by next Tuesday.

Before writing another line of code, ask yourself:

- Do you own the data? If it was scraped from the open web, the answer is no.

- Do you own the workflow? Can your system execute the final action via legacy APIs, or does it just generate a summary?

- Is your billing tied to outcomes? Are you charging for logins or for labor replaced?

- Can you survive a vendor rug-pull? If your primary LLM API goes down forever, does your business die?

| The “Death Valley” Approach (Obsolete) | The “Survival Path” (Current) |

|---|---|

| Hard-coded to one foundational API | Multi-model routing based on cost/latency |

| Moat built on complex system prompts | Proprietary “User-Correction” data flywheels |

| Thin wrapper on top of an LLM | Deep, native integration with legacy industry APIs |

| Fixed monthly per-seat SaaS subscription | Paid per Outcome / Agentic Task Completion (ATC) |

| Relying on foundational sandboxes | Custom, air-gapped, or localized execution capabilities |

The future does not belong to the founders with the cleverest prompts. It belongs to the operators who own the human correction loop and embed themselves so deeply into the messy, non-digital reality of physical industries that no API update can ever dislodge them.